Annotating sport performances makes it possible to analyze them quantitatively and qualitatively, and to profile athletes to identify their strengths and weaknesses. We designed an annotation and analytical tool tailored to lead climbing analysis, developed with and for the French Climbing Federation. We followed a user-centered design approach based on an iterative design cycle. This cycle was driven mostly by virtual meetings with the federation's trainer and analyst, which helped us identify requirements and implement essential features over time. We complemented these meetings with two workshops involving the trainer and analyst, as well as French athletes competing at the international level, to identify the tool's advantages and limitations. This work contributes a list of insights, drawn from the design process and stakeholder feedback, that inform the design of annotation and analytical tools for lead climbing and potentially other sports.

2023

Case Study, Video Annotation, User-Centered Design

High-Level Sport: Lead Climbing

Lead climbing is a discipline with a long history that was only recently included in the 2020 Tokyo Olympics. The goal is to climb as high as possible on a wall of artificial holds within 6 minutes. Athletes climb with a rope that they clip into quickdraws attached to the wall, which protects them in the event of a fall. The score is based on the number and type of holds grasped, as well as the ascent duration. Existing tools are costly and limited, so we designed one tailored to the federation's needs for producing data and analyzing performances.

Collaborating with the French Climbing Federation (FFME)

We started working with the FFME in May 2022 and have held virtual meetings with them every two weeks since then. During this period, we also organized two in-person workshops to test various versions of the tool and gather valuable feedback from professionals. The tool was integrated into a suite of applications designed for the FFME as part of the PerfAnalytics project. These applications are built on the Dash full-stack framework, which relies on React.js for the front end. They make it easy to upload and index videos, so that users can quickly find performances to annotate. Initial virtual interviews with the federation's trainer and analyst revealed that the tool should serve two main purposes:
- help analysts annotate a performance quickly;
- help them analyze the outcomes of a performance once it is annotated.

We compiled a list of design considerations to guide the design of the tool. Further details are available in the paper.

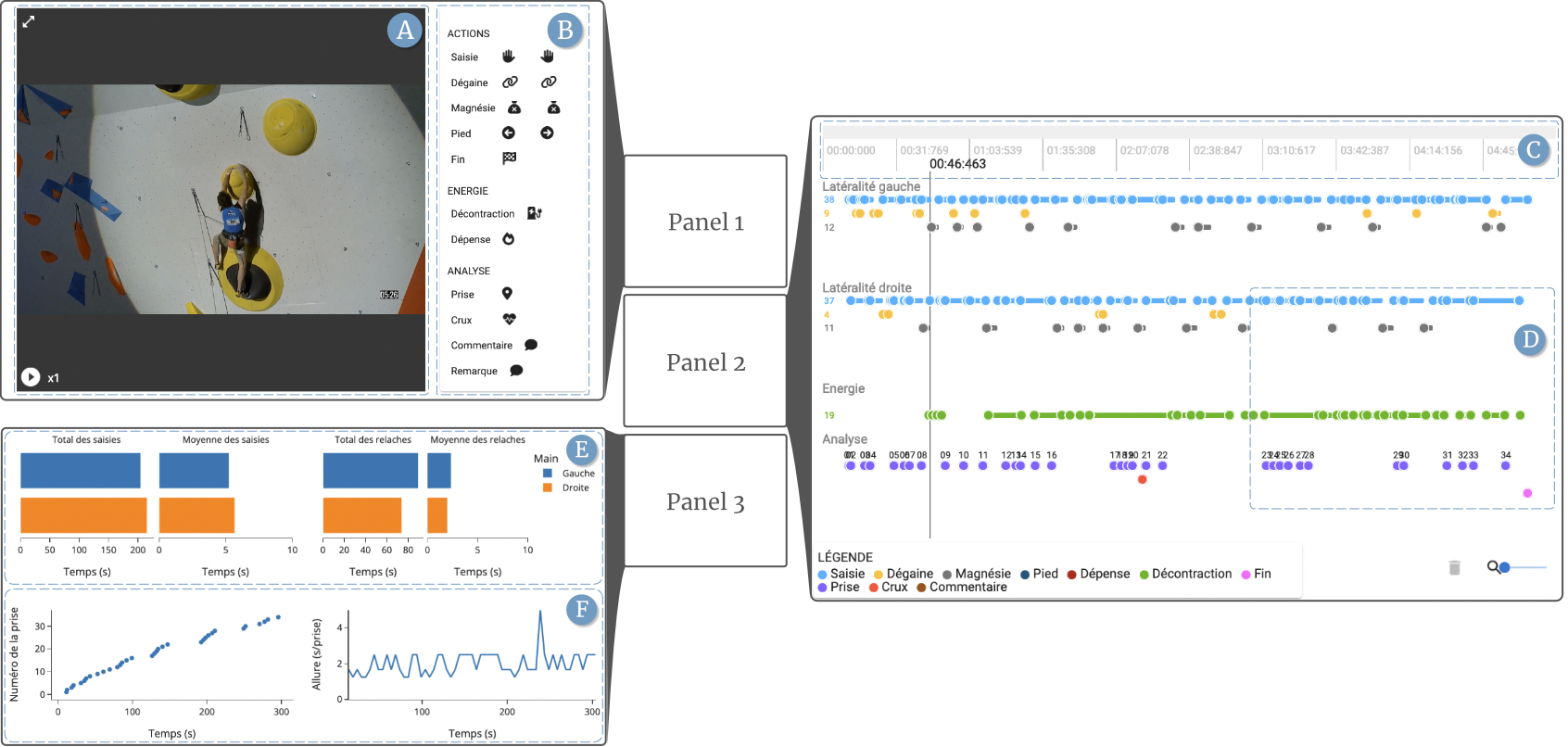

Video Annotation and Analytical Tool Tailored to Lead Climbing

The tool consists of three panels, shown in the figure above:
- one to play the video stream and create annotations;
- a second to visualize the annotations;
- a third to summarize the quantitative information they represent.

The first panel presents the performance video stream (a) and a sequencing panel for creating annotations (b). The second panel displays the timeline (c), where annotations appear as circles and lines that represent transient or lasting actions (d). The last panel presents plots of either the cumulative or average holding and resting times for both hands (e), or the score evolution and climbing speed (f).
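To make the summaries in panels (e) and (f) concrete, here is a minimal Python sketch of how such values could be derived from timeline annotations. It is not the tool's actual implementation. The following are all hypothetical and chosen for illustration only:
- the `LastingAnnotation` record and its `hand`/`action` labels;
- the `(time, score)` representation of scoring events;
- the helper names `hand_time_summary` and `climbing_speed`.

Climbing speed is assumed here to mean score gained per second between the first and last scoring events.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class LastingAnnotation:
    """Hypothetical record for a lasting action (drawn as a line on the timeline)."""
    hand: str     # assumed values: "left" or "right"
    action: str   # assumed labels, e.g. "hold" or "rest"
    start: float  # seconds from the start of the video
    end: float    # seconds from the start of the video

    @property
    def duration(self) -> float:
        return self.end - self.start


def hand_time_summary(annotations):
    """Map (hand, action) to (cumulative seconds, average seconds per annotation)."""
    totals = defaultdict(float)
    counts = defaultdict(int)
    for a in annotations:
        key = (a.hand, a.action)
        totals[key] += a.duration
        counts[key] += 1
    return {key: (totals[key], totals[key] / counts[key]) for key in totals}


def climbing_speed(score_events):
    """Average score gained per second between the first and last scoring events.

    score_events: (time_in_seconds, score) pairs, e.g. taken from transient
    "hold reached" annotations (an assumed representation).
    """
    events = sorted(score_events)
    # Fewer than two events, or no elapsed time, gives no measurable speed.
    if len(events) < 2 or events[-1][0] == events[0][0]:
        return 0.0
    (t0, s0), (t1, s1) = events[0], events[-1]
    return (s1 - s0) / (t1 - t0)


if __name__ == "__main__":
    annotations = [
        LastingAnnotation("left", "hold", 0.0, 4.0),
        LastingAnnotation("left", "hold", 6.0, 8.0),
        LastingAnnotation("right", "rest", 2.0, 5.0),
    ]
    print(hand_time_summary(annotations))
    # {('left', 'hold'): (6.0, 3.0), ('right', 'rest'): (3.0, 3.0)}
    print(climbing_speed([(0.0, 0), (10.0, 5), (20.0, 12)]))  # 0.6
```

Accumulating totals and counts in one pass lets the same data feed both the cumulative and the average view, which mirrors the "cumulated or average" choice offered in panel (e).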

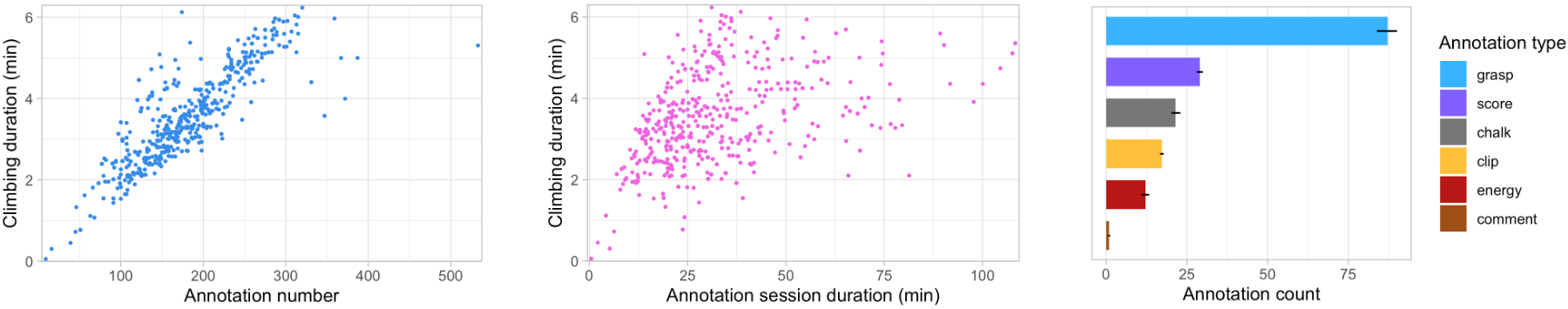

Tool Usage (as of March 18, 2023)

The federation regularly uses the tool to annotate competition videos. We count 493 annotated videos, with an average climbing duration of 3.5 minutes (± 1.14 minutes). On average, annotators produced 178 annotations per video (± 64) and spent 33.7 minutes (± 18.9 minutes) analyzing each video. The analysis duration is an estimate and thus imprecise. Still, it indicates that analyzing a video can be long and tedious, depending on its content. We evaluated the tool's features, and the benefits athletes gain from using it, through two workshops. Details are available in the paper.
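As a rough illustration of why analysis is demanding, the reported averages can be combined into simple ratios. These are ratios of means rather than per-video measurements, so they only approximate the typical effort:

$$
\frac{33.7\ \text{min of analysis}}{3.5\ \text{min of climbing}} \approx 9.6
\qquad
\frac{178\ \text{annotations}}{3.5\ \text{min of climbing}} \approx 51\ \text{annotations per minute of climbing}
$$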

A Case Study on the Design and Use of an Annotation and Analytical Tool Tailored To Lead Climbing
Bruno Fruchard, Cécile Avezou, Sylvain Malacria, Géry Casiez, Stéphane Huot
CHI'23: Proceedings of the ACM SIGCHI Conference on Human Factors in Computing Systems